🎼 Music Intelligence: Project Status

An ongoing look at the development of an adaptive learning system for music theory.

Updated periodically as development progresses.

What Music Intelligence Is

Music Intelligence is a SaaS-based educational platform being developed by Momentum Creative Technology Inc.

It is designed to improve how complex symbolic systems are learned—beginning with music theory—by shifting away from static lesson delivery toward responsive, behavior-aware instruction.

Rather than presenting fixed sequences of content, the system is being built to observe how a learner interacts and adjust instruction dynamically in response.

Why This Matters

Most digital learning systems follow a predefined path. They evaluate whether an answer is correct, but they do not meaningfully respond to how the learner arrived at that answer.

This leads to a common outcome:

students learn to recognize patterns, but not to understand them.

Music Intelligence is being designed to address this gap.

The system will monitor interaction patterns such as:

- response timing

- hesitation and repetition

- error structure

- correction behavior

The goal is to respond not just to correctness but to the learner's thinking process, guiding the learner toward understanding rather than simple completion.
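As a rough illustration of how the signals listed above might be captured, the sketch below records each response as a small record and reduces a session to those four signal families. The `Attempt` fields, the `summarize` function, and the rule that a hesitation is any answer slower than twice the learner's own median are assumptions made for this example, not the platform's actual data model.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Attempt:
    """One learner response to one exercise item (hypothetical schema)."""
    item_id: str
    expected: str         # correct answer, e.g. "G4"
    given: str            # what the learner submitted
    latency_ms: int       # time from prompt display to submission
    changed_answer: bool  # True if the learner revised before submitting


def summarize(attempts: list[Attempt]) -> dict:
    """Reduce a non-empty list of attempts to simple behavioral signals."""
    if not attempts:
        raise ValueError("summarize() needs at least one attempt")

    latencies = [a.latency_ms for a in attempts]
    typical = median(latencies)

    # Error structure: count each (expected, given) confusion pair.
    confusions: dict[tuple[str, str], int] = {}
    for a in attempts:
        if a.given != a.expected:
            key = (a.expected, a.given)
            confusions[key] = confusions.get(key, 0) + 1

    # Repetition: items the learner attempted more than once.
    per_item: dict[str, int] = {}
    for a in attempts:
        per_item[a.item_id] = per_item.get(a.item_id, 0) + 1

    return {
        "median_latency_ms": typical,
        # Hesitation (assumed rule): slower than twice the learner's median.
        "hesitations": sum(1 for t in latencies if t > 2 * typical),
        "repeated_items": sum(1 for n in per_item.values() if n > 1),
        "confusions": confusions,
        "self_corrections": sum(1 for a in attempts if a.changed_answer),
    }


history = [
    Attempt("n1", "G4", "G4", 900, False),
    Attempt("n2", "B4", "D5", 2600, False),
    Attempt("n2", "B4", "B4", 1100, True),
    Attempt("n3", "E4", "E4", 1000, False),
    Attempt("n4", "B4", "D5", 1200, False),
]
report = summarize(history)
```

In this sample session the learner twice reads B4 as D5. A correctness-only score would record two wrong answers, while the confusion count shows that the same misreading recurred.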

Current Development Status

Music Intelligence is currently in active prototype development, with multiple working components under continuous refinement.

The platform is evolving as a coordinated set of systems that together form the foundation of an adaptive learning environment.

Core Systems in Development

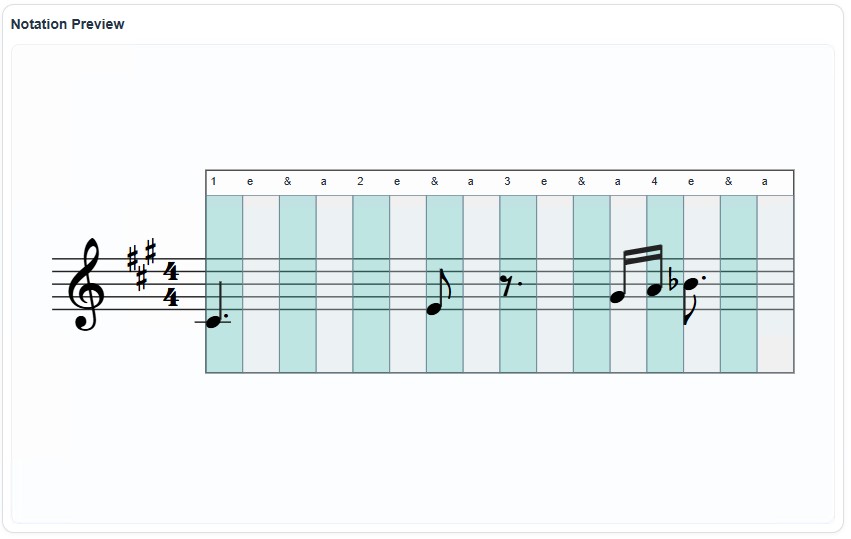

🎼 Notation Engine (MINE)

Example output from the Music Intelligence Notation Engine (MINE), demonstrating rhythmic alignment, beam grouping, dotted values, rests, and instructional timing overlay.

The Music Intelligence Notation Engine (MINE) is the core symbolic rendering system of the platform.

It is a browser-based engine that renders musical notation using SVG in combination with the SMuFL-compliant Bravura font.

Current capabilities include:

- Treble and bass staff rendering (grand staff in progress)

- Pitch-to-staff mapping with ledger lines

- Rhythm system supporting quarter- through sixteenth-note timing

- Stem, flag, and beam rendering

- JSON-driven score input (actively expanding)

- Duration overlay system for instructional rhythm visualization

The engine is functional and under active refinement, with ongoing work focused on spacing accuracy, alignment, and instructional clarity.
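To make the pitch-to-staff mapping more concrete, here is a minimal sketch of one common way to compute a note's vertical staff position and the number of ledger lines it needs. It is not MINE's implementation: the function names, the pitch string format (such as "C4" or "F#4"), and the pixel values are assumptions chosen for illustration.

```python
# Diatonic index of each letter within an octave (accidentals do not
# change the vertical position of a notehead).
LETTER_STEPS = {"C": 0, "D": 1, "E": 2, "F": 3, "G": 4, "A": 5, "B": 6}

# Pitch that sits on the bottom line of each staff.
BOTTOM_LINE = {"treble": ("E", 4), "bass": ("G", 2)}


def diatonic_step(letter: str, octave: int) -> int:
    """Count diatonic steps upward from C0."""
    return octave * 7 + LETTER_STEPS[letter]


def staff_position(pitch: str, clef: str) -> int:
    """Return the position in half staff spaces above the bottom line.

    0 is the bottom line, 8 is the top line; even values are lines
    and odd values are spaces. Assumes a single-digit octave.
    """
    letter, octave = pitch[0].upper(), int(pitch[-1])
    return diatonic_step(letter, octave) - diatonic_step(*BOTTOM_LINE[clef])


def ledger_lines(position: int) -> int:
    """Number of ledger lines needed for a note at this position."""
    if position < 0:
        return (-position) // 2
    if position > 8:
        return (position - 8) // 2
    return 0


def note_y(position: int, bottom_line_y: float, staff_space: float) -> float:
    """SVG y coordinate of the notehead (y grows downward in SVG)."""
    return bottom_line_y - position * (staff_space / 2)


middle_c_treble = staff_position("C4", "treble")  # one ledger line below
middle_c_bass = staff_position("C4", "bass")      # one ledger line above
```

Working in half staff spaces keeps lines and spaces on a single integer axis, so the same position value can drive notehead placement, ledger lines, and stem direction.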

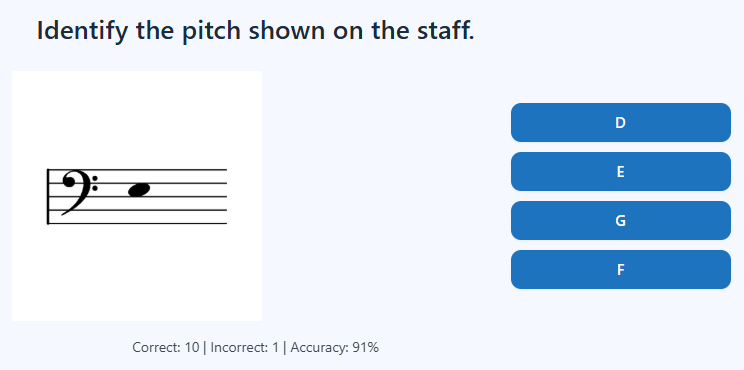

🖼️ Visual Learning Lab (Lab-1)

An image-based learning system is in place to support multiple-choice and recognition-based exercises.

Originally developed using individual notation symbols and single-note identification, this system has broader application potential, including:

- composer recognition

- instrument identification

- visual reinforcement of theoretical concepts

- non-notation-based instructional content

This lab establishes a flexible framework for visual learning beyond traditional notation.

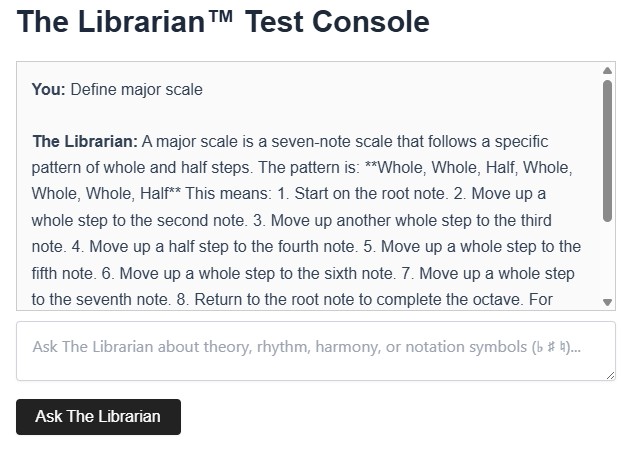

💬 Conversational Interface (Prototype)

An early-stage music-focused conversational system has been implemented and tested.

While not yet constrained for production use, it demonstrates the ability to:

- respond to music-related questions

- assist with conceptual understanding

- provide an additional layer of learner support

This component is intended to evolve into a guided instructional assistant integrated with the broader system.

🧠 Adaptive Learning Layer (In Development)

The adaptive system is currently being designed and integrated across all components.

The model includes:

- tracking of learner interaction patterns

- analysis of behavioral signals (latency, repetition, error types)

- dynamic adjustment of instructional flow and feedback

This layer is not yet fully implemented in a public-facing environment but is central to the platform’s long-term functionality.
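The sketch below shows, under stated assumptions, how behavioral signals could be turned into an instructional adjustment with a few explicit rules. The `Signals` fields, the action names, and the thresholds (90% accuracy, 1500 ms) are hypothetical placeholders rather than the platform's actual model, which is still being designed.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Signals:
    """Summary of a recent window of learner activity (hypothetical)."""
    accuracy: float                # fraction correct, 0.0 to 1.0
    median_latency_ms: float       # typical response time
    repeated_error: Optional[str]  # recurring confusion, e.g. "B4->D5"


def next_step(s: Signals, mastery: float = 0.9, fast_ms: float = 1500) -> str:
    """Pick the next instructional action from simple, assumed rules."""
    # A recurring confusion is addressed first, whatever the overall score.
    if s.repeated_error is not None:
        return f"targeted_drill:{s.repeated_error}"
    # Accurate and quick: the learner is ready for new material.
    if s.accuracy >= mastery and s.median_latency_ms <= fast_ms:
        return "advance"
    # Accurate but slow: consolidate before moving on.
    if s.accuracy >= mastery:
        return "fluency_practice"
    # Otherwise revisit the material with more support.
    return "review_with_hints"


decision = next_step(Signals(accuracy=0.95, median_latency_ms=3000,
                             repeated_error=None))
```

Here a learner who answers correctly but slowly is routed to fluency practice rather than advanced, which is one simple way latency can change the outcome when correctness alone would not.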

Development Environment

All systems are currently developed and tested within a controlled lab environment.

This environment enables:

- detailed inspection of rendering behavior

- validation of rhythmic and spatial accuracy

- iterative development of lesson structures

- controlled testing of interaction patterns

The lab is intentionally not user-facing and exists to ensure stability and clarity before public release.

What Is Currently In Progress

Active development is focused on connecting these systems into a unified instructional experience.

Key areas of work include:

- JSON-driven lesson and display configuration

- Key signature and time signature integration

- Refinement of accidental and dotted-duration logic

- Grand staff dual-staff rendering

- Alignment between notation and instructional overlays

- Integration of visual, symbolic, and conversational components

- Preparation of a simplified, user-facing lesson interface
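As an illustration of the JSON-driven configuration and dotted-duration items above, the following sketch parses a small score description, computes each event's length with the standard dotted-note rule (each dot adds half of the previous value), and checks that the measure fills its time signature. The JSON field names and helper functions are assumptions for this example and may differ from the engine's real schema.

```python
import json
from fractions import Fraction

# Note values as fractions of a whole note (the range named in this report).
BASE = {
    "quarter": Fraction(1, 4),
    "eighth": Fraction(1, 8),
    "sixteenth": Fraction(1, 16),
}

# Hypothetical score format; "dots" defaults to 0 when omitted.
SCORE_JSON = """
{
  "clef": "treble",
  "time_signature": [4, 4],
  "measure": [
    {"pitch": "G4", "duration": "quarter", "dots": 1},
    {"pitch": "A4", "duration": "eighth"},
    {"rest": true, "duration": "quarter"},
    {"pitch": "F#4", "duration": "eighth"},
    {"pitch": "G4", "duration": "sixteenth"},
    {"pitch": "A4", "duration": "sixteenth"}
  ]
}
"""


def event_length(event: dict) -> Fraction:
    """Length of a note or rest, including dots: base * (2 - 1/2**dots)."""
    dots = event.get("dots", 0)
    return BASE[event["duration"]] * (2 - Fraction(1, 2 ** dots))


def layout(events: list) -> tuple:
    """Return ([(onset, length), ...], total_length) for one measure."""
    position = Fraction(0)
    placed = []
    for event in events:
        length = event_length(event)
        placed.append((position, length))
        position += length
    return placed, position


score = json.loads(SCORE_JSON)
placed, total = layout(score["measure"])
beats, beat_unit = score["time_signature"]
measure_is_full = total == Fraction(beats, beat_unit)
```

Using exact fractions rather than floating-point numbers avoids rounding drift, so onsets computed this way can also serve as alignment points for an instructional overlay.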

What Comes Next

The next milestone is a minimal public release focused on basic sight-reading instruction.

This initial release will include:

- a clean, user-facing lesson page

- structured exercises using the notation engine

- basic interaction tracking

- early adaptive feedback behaviors

Following this stage, development will expand toward:

- user accounts and progress tracking

- AI-assisted instructional guidance

- deeper integration of the conversational system

- expansion into additional symbolic learning domains

Development Approach

Music Intelligence is being built deliberately, with emphasis on:

- clarity over complexity

- accuracy over speed

- interaction quality over feature volume

The platform is intended to function not as a content library, but as a structured, responsive guide.

Status Summary

Music Intelligence is an active, multi-system prototype with:

- a functional browser-based notation engine

- a working visual learning lab

- an early conversational interface

- a defined adaptive learning architecture

Core components are operational and are being integrated toward a first public instructional release.

Development is ongoing.